The Chaos Management Skills Assessment Series: A Test Translation Case Study Episode 4 – Linguistic Quality Assurance and Optical Check

by Steve Dept, cApStAn CEO

Readers of this informative series on good practice in test translation are aware that the Chaos Management Skills Assessment is a fictitious project, which we set up for the exclusive purpose of the series. The purpose is to illustrate the complexity of test translation and the added value of a robust linguistic quality assurance design. This is the fourth and final issue in the series, and it takes a closer look at the components of linguistic quality assurance that take place after the actual translation, including the final optical check, which is particularly important in computer-delivered tests.

Our imaginary Chaos Management Skills Assessment (CMSA) measures competencies in consultants who assist companies in damage control situations. If you read the first 3 episodes, you will know that in this project we were fortunate enough to be given the opportunity to implement good practices. There were preliminary meetings between the test delivery platform engineers and cApStAn’s translation technologists (Episode 3). Translation memories were generated from existing translations (Episode 1) of a previous cycle of the test. There was a translatability assessment (Episode 2), and clear item-by-item translation and adaptation notes were developed. There was a robust translation workflow, and good tools were used. Is that enough to ensure that the 12 target language versions of the test are fair, reliable and valid? Perhaps it is. It should be. However, one can’t be sure: no test translation process is complete without linguistic quality assurance and equivalence checks.

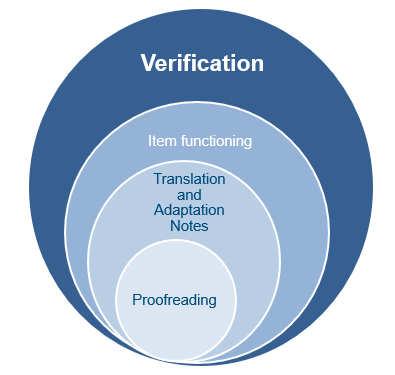

We set up a two-tier linguistic quality assurance design: a translation verification and a final optical check. The scope of verification is broader than a linguistic review. Proofreading skills are but a modest subset of the skills deployed by a verifier, who needs to strike the right balance between faithfulness to the source and fluency in the target version.

So, what is Translation Verification?

Trained verifiers focussed on linguistic equivalence, which is a proxy for functional equivalence. They performed a thorough, segment-by-segment equivalence check between the target version and the source version of the test items and used cApStAn’s set of 14 verifier intervention categories to report each case where equivalence was threatened. The categories are used so that verifiers report on the issues in a standardised way. For example, a literal match between a stimulus and an item was lost in the Russian version. The verifier selected the “Matches and Patterns” category, implemented the suggested correction directly in Russian in the CAT tool (in this case, she restored the literal match) and described the rationale of the intervention in English in a dedicated comment field. This allowed us to generate reports on the type of issue reported in each language version, but also in each item across all language versions. Part of the verifier’s brief was to report on compliance with each item-by-item translation note, so we also had statistics on that aspect.
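To give an idea of how standardised categories make this kind of reporting possible, here is a minimal sketch in Python. It is an illustration only, not cApStAn's actual tooling: the record layout, the item identifiers, the language codes and the "Placeholder category B" label are all assumptions made for the example. Only the “Matches and Patterns” category name comes from the project described above.

```python
from collections import Counter
from typing import NamedTuple


class Intervention(NamedTuple):
    """One verifier intervention (hypothetical record layout)."""
    language: str  # target language version, e.g. "ru"
    item_id: str   # hypothetical item identifier
    category: str  # verifier intervention category
    comment: str   # rationale of the intervention, written in English


# Hypothetical sample data, invented for illustration only.
interventions = [
    Intervention("ru", "ITEM_07", "Matches and Patterns",
                 "Restored literal match between stimulus and item."),
    Intervention("ru", "ITEM_12", "Placeholder category B",
                 "Illustrative comment."),
    Intervention("fr", "ITEM_07", "Matches and Patterns",
                 "Illustrative comment."),
    Intervention("de", "ITEM_03", "Placeholder category B",
                 "Illustrative comment."),
]


def count_by_language_and_category(records):
    """Tally interventions per (language, category) pair."""
    return Counter((r.language, r.category) for r in records)


def count_by_item(records):
    """Tally interventions per item across all language versions."""
    return Counter(r.item_id for r in records)


by_lang_cat = count_by_language_and_category(interventions)
by_item = count_by_item(interventions)

print("Interventions per language version and category:")
for (language, category), n in sorted(by_lang_cat.items()):
    print(f"  {language}  {category}: {n}")

print("Interventions per item, all language versions combined:")
for item_id, n in by_item.most_common():
    print(f"  {item_id}: {n}")
```

Because every intervention carries the same fields, the same list of records can be sliced by language version or by item. An item that attracts interventions in several language versions may point to an issue in the source rather than in any single translation.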

Finally, when the verified translation was ready, the XLIFF files were imported back into the test delivery platform, and a final optical check was performed: the verifier looked at each screen, saw what the testee would see, and checked that all imports appeared correctly, that no label was truncated and that the translated version was ready for production. Time to go live!

If you’d like to learn how we can help you with your test localization projects, do fill in the form below, and we’ll get back to you as soon as we can.