The Chaos Management Skills Assessment Series: A Test Translation Case Study. Episode 1: Retrieving and leveraging existing translations

by Steve Dept, cApStAn CEO

Please don’t spend too much time looking up the Chaos Management Skills Assessment. It is a fictitious project, which we set up for the exclusive purpose of this informative series on good practice in the translation of skills assessments. We have collated events and features from several multilingual assessments on which we have worked with reputable testing organisations. The purpose is to illustrate the complexity of test translation and the added value of a robust linguistic quality assurance design. We plan to send out four consecutive issues in this series, and this is the first.

Our imaginary Chaos Management Skills Assessment (CMSA) measures competencies in consultants who are routinely called in to help companies in damage control situations. This is the second assessment cycle, and some of the items could be recycled from the previous cycle. The test is administered in 12 different languages. In the first cycle, it was administered in 6 of those languages. It was agreed to have the new assessment items translated by professionals.

The CMSA Programme Manager asked cApStAn what could be done to produce valid and reliable translations of the skills assessment and to maximize comparability across languages and over time in this multilingual assessment. In this context, we also investigated whether translated content from the first cycle could be leveraged.

Analysis of existing source and translated items

First, we examined content that remained the same across the two CMSA assessment cycles, which we refer to as trend content. Before going into production with cycle 2, the CMSA test developers chose to revise the items for which they thought the wording could be improved. Perhaps the wording did improve, but what seemed to be minor revisions in the English master version called for more extensive edits in the existing test translations from the first cycle. As the International Association for the Evaluation of Educational Achievement (IEA) famously put it: if you want to measure change, don’t change the measure. Fine-tuning the master version of a link item can lead to new meaning shifts in translated versions, and there is a risk of losing the trend.
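One way to see which trend items were touched is to compare the two master versions item by item. The following is a minimal sketch, not the procedure used in the project: it assumes each cycle's master version is available as a mapping from item ID to English text. The item IDs and wordings are invented for illustration, and `classify_items` is a hypothetical helper.

```python
from difflib import SequenceMatcher


def classify_items(cycle1, cycle2):
    """Sort cycle 2 item IDs into unchanged trend, revised trend, and new items."""
    report = {"unchanged": [], "revised": [], "new": []}
    for item_id, text in cycle2.items():
        if item_id not in cycle1:
            # No counterpart in cycle 1: needs a fresh translation.
            report["new"].append(item_id)
        elif cycle1[item_id] == text:
            # Identical master text: the existing translation can be kept.
            report["unchanged"].append(item_id)
        else:
            # Master text was edited: record how similar the two versions are
            # so that a linguist can review the existing translation.
            similarity = SequenceMatcher(None, cycle1[item_id], text).ratio()
            report["revised"].append((item_id, round(similarity, 2)))
    return report


# Invented example data (assumption, not taken from any real assessment).
cycle1_master = {
    "CM01": "Which action should you take first?",
    "CM02": "How would you inform the staff?",
}
cycle2_master = {
    "CM01": "Which action should you take first?",
    "CM02": "How would you brief the staff?",
    "CM03": "Who should speak to the press?",
}

report = classify_items(cycle1_master, cycle2_master)
print(report)
```

A high similarity score does not mean that the existing translation is safe to keep: as noted above, a seemingly minor edit in the master version can call for more extensive edits in the translations, so every revised item still needs human review.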

Translation and Adaptation notes

Second, we looked at what test translation notes could be shared with the linguists. The initial plan was to send the new master version in the form of an Excel spreadsheet. There were tags for fills, placeholders and routing. We recommended (i) to encapsulate those tags so that the translators could concentrate on text; (ii) to prepare a style guide covering e.g. gender neutrality and forms of address; (iii) to generate a glossary with recurring terms and expressions; (iv) to draw up item-by-item translation and adaptation notes; and (v) to clearly distinguish new materials from items that were retrieved from a previous cycle, for which there are existing translations.
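Recommendation (i) can be illustrated with a small sketch. The tag syntax of the CMSA spreadsheet is not described here, so the square-bracket form (`[FILL:...]`, `[PH:...]`, `[ROUTE:...]`) and the numbered tokens (`<1/>`, `<2/>`) are assumptions made for illustration, as are the helper names `encapsulate` and `restore`.

```python
import re

# Hypothetical tag syntax, assumed for illustration only.
TAG_PATTERN = re.compile(r"\[(?:FILL|PH|ROUTE):[^\]]*\]")


def encapsulate(text):
    """Replace each tag with a numbered token; return (protected_text, mapping)."""
    mapping = {}

    def _swap(match):
        token = f"<{len(mapping) + 1}/>"
        mapping[token] = match.group(0)
        return token

    return TAG_PATTERN.sub(_swap, text), mapping


def restore(text, mapping):
    """Put the original tags back; fail loudly if a token went missing."""
    missing = [token for token in mapping if token not in text]
    if missing:
        raise ValueError(f"tokens missing from translation: {missing}")
    for token, tag in mapping.items():
        text = text.replace(token, tag)
    return text


source = "Dear [FILL:name], how would you brief the board? [ROUTE:Q7]"
protected, tags = encapsulate(source)
print(protected)

# The translator works on the protected text only (invented French example).
translated = "Cher/Chère <1/>, comment informeriez-vous le conseil ? <2/>"
final = restore(translated, tags)
print(final)
```

In practice, translation tools usually offer their own mechanisms for locking tags. The point of the sketch is only that translators see short, inert tokens instead of raw markup, and that a dropped token is caught before the file is returned.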

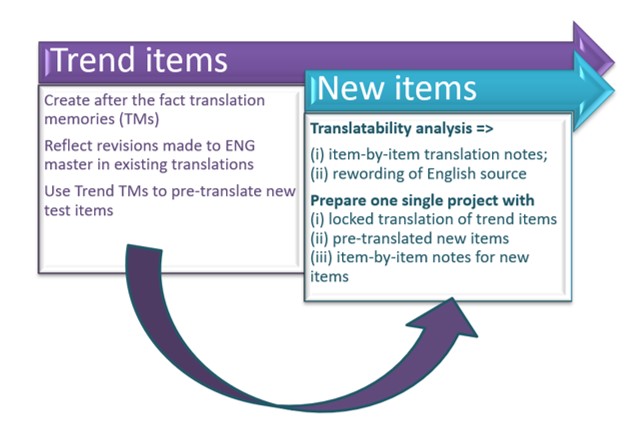

This led to a two-pronged approach for trend content vs new content, as you can see in the figure below.

The rule we applied was that, since existing translations had been used in the field to measure skills, the translations of new items should, as far as possible, use the same wording for prompts, the same form of address and the same recurring expressions as the trend items, as long as these were not outdated or blatantly incorrect. In multilingual assessments, cosmetic improvements of the wording should not give rise to systematic editing of trend items, as the revised wording may call on different cognitive skills to answer the question and possibly affect the level of difficulty of the item.

In the next episode, we shall describe the translatability assessment and the process of drawing up item-by-item translation and adaptation notes in test translation.

Meanwhile, if you’d like to learn about different translation and adaptation designs for multilingual tests and assessments, fill in the form below, and we’ll get back to you as soon as we can.