“Algospeak”, a new vocabulary that has emerged on TikTok as content creators try to get around algorithms and strict content moderation

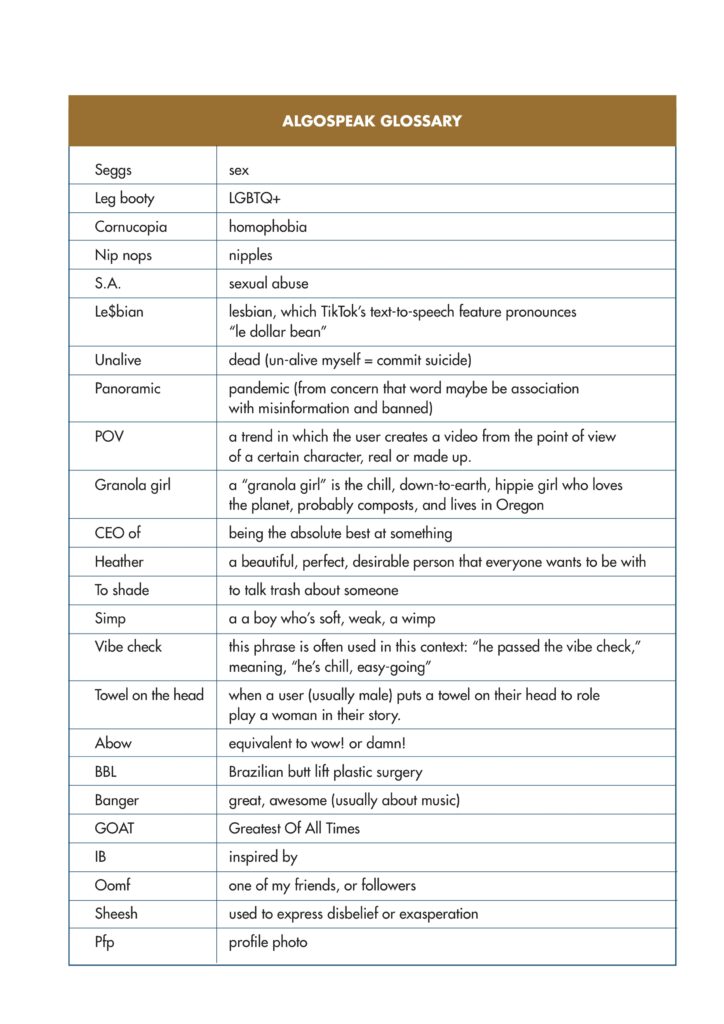

Seggs, leg booty, cornucopia, nip nops, S.A., D.V. and panoramic are just a few examples of a new vocabulary that is emerging on the TikTok social media platform, often referred to as “Algospeak” (see table below for the meanings). In a recent article for The New York Times, senior staff editor Melina Delkic writes that TikTok content creators have increasingly been coming up with substitutes for words because they worry about running afoul of TikTok moderation rules and being barred from posting. “Shadow banning” is a term used by people who believe their videos have been suppressed from view because they touched on topics the platform does not like. Critics say that TikTok is too aggressive in its moderation; the platform, for its part, claims a firm hand is needed in a freewheeling online community where plenty of users do try to post harmful videos. Some 113 million videos were taken down from April to June 2022, 48 million of which were removed by automation, the company states in its latest TikTok guidelines enforcement report, dated September 2022 (see footnote 1). Is TikTok’s content moderation policy effective, fair and free of bias? Delkic says that while much of the content removed has to do with violence, illegal activities or nudity, many violations of the rules seem to dwell in a grey area.

Limitations of content moderation based on algorithms

Faithe J Day holds a B.A. in English and Digital Humanities and a PhD in Communication Studies and is Assistant Professor in the Department of Black Studies at the University of California, Santa Barbara (UCSB). In an article for Medium she says that “the irony of using algorithms to police the language of users is that algorithms do not speak the way humans do”. Even with all of the artificial intelligence and machine learning models that have been developed, she adds, computers still take a very literal approach to language, which is easily subverted by human users’ development and deployment of coded language. The kind of “doublespeak” being used includes abbreviations, euphemisms, and even omissions and pauses, where audience members are meant to fill in the blanks using context clues, making it easier for users “to fly beneath the radar” of algorithms that flag videos containing certain words, tags, or popular talking points. Additionally, as algorithms are trained with information and data provided by humans, they tend to reproduce existing biases in society.
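The literal approach to language described above can be illustrated with a minimal sketch. It assumes the simplest possible filter, one that flags a caption when a word exactly matches a blocklist. The blocklist and the function name `is_flagged` are hypothetical and chosen for illustration only; nothing here represents how TikTok's moderation actually works.

```python
import re

# Hypothetical blocklist for illustration only; not TikTok's actual list.
BLOCKED_TERMS = {"anorexia", "marijuana"}


def is_flagged(caption: str) -> bool:
    """Return True if any word in the caption exactly matches a blocked term."""
    words = re.findall(r"[a-z]+", caption.lower())
    return any(word in BLOCKED_TERMS for word in words)


captions = [
    "my recovery from anorexia",  # contains a blocked term
    "my recovery from ana",       # coded stand-in for the same topic
    "a song about Mary Jane",     # euphemism for a blocked term
]

for caption in captions:
    print(caption, "->", is_flagged(caption))
```

In this sketch the first caption is flagged even though it describes recovery, while the coded stand-in and the euphemism pass unnoticed, because the filter compares strings and has no notion of context or intent.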

Potentially dangerous uses of TikTok “Algospeak”

Although online censorship exists to decrease the amount of unsafe or inappropriate video content, there are many examples of words and phrases being created and used in ways that allow subversive or underground communities to grow, warns Faithe J Day. Examples include the rise of incels (involuntary celibates), “anti-vax” conspiracists and pro-ana groups (the word “ana” is a stand-in for anorexia). Many of these communities reflect problematic beliefs, but their posts are able to go undetected by the algorithms “before their language use gains traction and/or critique within the public sphere”.

Why human overseers are necessary for content moderation

The impact that censorship algorithms have had on TikTok culture and content demonstrates the importance of human overseers when it comes to the content that is allowed on a social media platform, says Faithe J Day. “Human overseers can do the work of ensuring the decisions made by machines reflect the realities of how a human would respond to that same content”, she adds. They analyse the machine’s reliability. At the same time, human oversight does not always make things easier for intersectionally marginalized communities, because of the way specific cultures and traditions are interpreted by people outside those communities. “Therefore, it will be important to not only train algorithms to better understand humans, but to also train the humans who oversee algorithms to have a better understanding of different cultures and language use”.

What the algorithms are not picking up

Although TikTok continues to censor content about topics of racial justice and social equity, it rarely censors popular music or sounds (which can be full of inappropriate and graphic language and imagery in English and many other languages), notes Faithe J Day. In this sense, she adds, topics such as Black Lives Matter and Gender Affirmation may underperform on the site because the algorithm can block them from appearing (see footnote 2 below), while songs about topics that break the platform’s rules can go undetected. For example, a user would not be able to talk freely about marijuana or other drugs, but songs about Mary Jane, or dances and trends that reference partying and drug use, can remain on the platform.

New TikTok vocabulary not related to censorship issues

In many cases, TikTok users are just having fun and being creative, rather than worrying about having their videos removed or being barred from posting. POV, granola girl, CEO of, heather, to shade, simp, vibe check and towel on the head are some examples of such new terms. According to Axis, an online parent community, “these Gen Z slang words and phrases signify belonging and membership to many TikTok users”. Axis provides resources and even a “Culture Translator” to help parents and caring adults understand their teenagers. More examples are listed in a recent article by Flowbox, an award-winning company which helps brands leverage and distribute social content: abow, BBL, banger, GOAT, IB, oomf, Sheesh, Pfp (see table below for meanings).

Will these words end up sticking around?

Probably not most of them, experts say. As soon as older people start using online slang popularised by young people on TikTok, the terms “become obsolete,” says Nicole Holliday, an assistant professor of linguistics at Pomona College, quoted in the NYT article. “Once the parents have it, you have to move on to something else.” The degree to which social media can change language is often overstated, she adds. Most English speakers do not use TikTok, and those who do may not pay much attention to the neologisms. Only time will tell!

Footnotes

(1) These are the topics which are addressed in the TikTok Guidelines: minor safety; dangerous acts and challenges; suicide, self-harm, and disordered eating; adult nudity and sexual activities; bullying and harassment; hateful behaviour; violent extremism; integrity and authenticity; illegal activities and regulated goods; violent and graphic content; copyright and trademark infringement; platform security; ineligible for the For You feed.

(2) Casey Fiesler, from the University of Colorado, quoted in an MIT Technology Review article about glitches in TikTok content moderation, says that some content is getting flagged because the person posting it is from a marginalized group and is talking about their experiences with racism. “Hate speech and talking about hate speech can look very similar to an algorithm.” Those who are disproportionately targeted for abuse end up being algorithmically censored for speaking out about it.

See also our articles on

“When the evolution of a language is driven by political dissent: the example of Chinese hot words”

Sources

“Leg Booty? Panoramic? Seggs? How TikTok Is Changing Language”, Melina Delkic, The New York Times, November 19, 2022

“TikTok Is the Latest Social Network to Reshape Language”, Tobias Carroll, Inside Hook, November 20, 2022

“31 Gen Z/TikTok slang, expressions and acronyms you need to know in 2022”, Flowbox, April 20, 2022

“Are Censorship Algorithms Changing TikTok’s Culture? How social media users push back against the policing of speech”, Faithe J Day, Medium, December 11, 2021

“Welcome to TikTok’s endless cycle of censorship and mistakes”, Abby Ohlheiser, MIT Technology Review, July 13, 2021

“The Ultimate Guide to TikTok Slang 2021”, Axis, January 05, 2021

“Tik Tok slang words and phrases you need to know”, Oxford International English School

Photo credit

Photo by Solen Feyissa on Unsplash