Garbage in, garbage out: feed AI translatable items

by Steve Dept – cApStAn partner

In some areas, artificial intelligence (AI) may be overhyped. Not so for neural machine translation (NMT). Every day, we hear and read about the new threats and opportunities that come with advances in AI. In the field of translation, the advances are real, the opportunities are “more, faster, more consistent” and the threat is “a new level of fluency that does its best to hide mistranslations”.

Let’s first clarify the concepts:

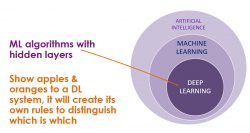

• AI is an umbrella term for (developing) a computer’s ability to perform tasks that are generally easy for a human brain but really hard for a computer.

• “Machine learning” (ML) is a subset of AI. ML is predictive: one feeds input data into algorithms that are programmed to use statistics and predict an output value (within an acceptable range). In translation, ML is used to identify recurring patterns in human translators’ choices, which helps the system assign a higher likelihood to one possible translation than to another.

• “Deep learning” (DL), finally, is a subset of ML: these are ML algorithms with hidden layers. The programmers cannot predict exactly how the computer will use these layers. In an image recognition program, for example, one just labels 1,000 pictures of oranges as “orange” and 1,000 pictures of apples as “apple”, and the DL system creates its own rules to tell them apart, whereas in standard machine learning, the programmers define the features that the computer uses to identify what is in the picture.

• NMT draws on DL to make decisions: it uses input such as large volumes of bilingual data to create its own fluency rules.
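The ML bullet above describes a system that learns from recurring patterns in translators’ choices to decide which translation is more likely. As a deliberately simplified sketch, assuming nothing more than relative frequencies over a hypothetical list of (source term, chosen translation) pairs, the idea can be illustrated in Python. Real ML and NMT systems use statistical models over far richer context; the function names and data here are illustrative only.

```python
from collections import Counter, defaultdict

# Hypothetical toy data: (source term, translation chosen by a human translator),
# as it might be extracted from validated past translations (English -> French).
TRANSLATOR_CHOICES = [
    ("Dutch teacher", "professeur de néerlandais"),
    ("Dutch teacher", "professeur de néerlandais"),
    ("Dutch teacher", "professeur néerlandais"),
    ("item", "item"),
    ("item", "question"),
    ("item", "item"),
]


def learn_choice_frequencies(pairs):
    """Count how often each translation was chosen for each source term."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    return counts


def translation_likelihoods(counts, source):
    """Estimate likelihoods as relative frequencies of past choices."""
    options = counts.get(source, Counter())
    total = sum(options.values())
    if total == 0:
        return {}  # unseen term: no evidence from past translator choices
    return {target: n / total for target, n in options.items()}


def predict(counts, source):
    """Pick the translation with the highest estimated likelihood, if any."""
    likelihoods = translation_likelihoods(counts, source)
    if not likelihoods:
        return None
    return max(likelihoods, key=likelihoods.get)


if __name__ == "__main__":
    model = learn_choice_frequencies(TRANSLATOR_CHOICES)
    print(translation_likelihoods(model, "Dutch teacher"))
    print(predict(model, "Dutch teacher"))
```

Note that such a context-blind count always returns the majority choice, whatever a given item actually means: it has no way of knowing which “Dutch teacher” the item writer had in mind.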

For some language combinations and domains, the output of some engines can be impressive.

When it comes to test material, disambiguation is key. Without help, the machine cannot infer from the context whether a “Dutch teacher” actually teaches Dutch or is merely a Dutch citizen who teaches any subject.

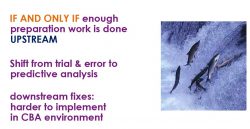

With a well-designed workflow that combines human expertise and NMT, it is technically possible to produce multiple language versions of test item banks faster and at a more affordable price than three years ago. If that is your goal, certain stages cannot be skipped.

The key word is “upstream”. What eats up a lot of time and resources is adding review upon review: reviews by linguists, subject matter experts, proofreaders, stakeholders, censors, end users and bilingual staff at the client. If there is good preparation work before the translation begins, there can be more automation and fewer reviews during and after translation. More preventive linguistic quality assurance, less corrective action.

Many translation professionals acknowledge that AI has boosted their productivity in recent years: MT contributes most when there is little leverage from translation memories. Automated quality assurance checks increase consistency and take over repetitive tasks such as harmonizing quotation marks, checking whether all segments are translated, or checking adherence to a glossary.
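The automated checks named above lend themselves to a short illustration. Below is a minimal Python sketch, assuming a hypothetical `Segment` record, a hypothetical English-to-French glossary and « » as the target-language quotation marks; it does not reproduce the behaviour of any particular translation or QA tool. It harmonizes double quotes, flags empty or unchanged targets, and reports glossary terms whose required translation is missing.

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical bilingual segment: an ID, source text and target text."""
    seg_id: str
    source: str
    target: str


# Hypothetical glossary (English source term -> required French target term).
GLOSSARY = {"teacher": "enseignant"}

# Matches a quoted span delimited by straight or curly double quotes.
QUOTED = re.compile(r'["“”]([^"“”]*)["“”]')


def harmonize_quotes(text, open_q="«", close_q="»"):
    """Rewrite every double-quoted span using one target-language quote pair."""
    return QUOTED.sub(lambda m: open_q + m.group(1) + close_q, text)


def run_qa(segments, glossary):
    """Return (segment ID, issue) pairs for untranslated segments and glossary misses."""
    issues = []
    for seg in segments:
        if not seg.target.strip():
            issues.append((seg.seg_id, "untranslated"))
            continue  # nothing else to check in an empty target
        if seg.target.strip() == seg.source.strip():
            issues.append((seg.seg_id, "target identical to source"))
        for term, required in glossary.items():
            # Whole-word, case-insensitive match of the glossary term in the source.
            in_source = re.search(rf"\b{re.escape(term)}\b", seg.source, re.IGNORECASE)
            # Simple case-insensitive substring check in the target.
            if in_source and required.lower() not in seg.target.lower():
                issues.append(
                    (seg.seg_id, f"glossary: '{term}' should be rendered as '{required}'")
                )
    return issues


if __name__ == "__main__":
    segments = [
        Segment("1", 'The teacher said "hello".', 'L’enseignant a dit "bonjour".'),
        Segment("2", "Read the text.", ""),
        Segment("3", "Ask your teacher.", "Demandez à votre professeur."),
    ]
    for seg in segments:
        seg.target = harmonize_quotes(seg.target)
    for seg_id, issue in run_qa(segments, GLOSSARY):
        print(seg_id, issue)
```

Run as a script, it should flag segment 2 as untranslated and segment 3 for using “professeur” instead of the glossary term “enseignant”. A plain substring check like this can miss inflected forms or accept the wrong ones, which is one reason a human linguist still reviews the flags.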

Test developers will need to disambiguate source material and work together with linguists to prepare contextual elements for both human translators and MT engines to interpret. Test developers and linguists will have new and more exciting tasks, and a higher level of specialisation will be required from human contributors.

Item writers need assistance to engineer the source version so that it is suitable both for translation into other languages and for a man-machine translation workflow.

This assistance is exactly what cApStAn has to offer to the testing industry. You need to make sure that AI is fed high-quality input, so that it yields high-quality output, and that it leverages databases of known issues. High-quality input refers both to the source version of the text and to the previous, validated translations that are fed into the system.

New paradigms for source optimization

Interacting with linguists and cultural brokers to train your input data is the key concept. In this paradigm, “to train input data” is to trim it, to program rules, to create style guides, to compile glossaries, to prepare routing, filters, dynamic text, and contextual information that can be read and interpreted by man and machine.

The best approach is proactive and interactive: spend some time and money reviewing and augmenting the source version together with linguists and cultural brokers.

We can come to your campus and work with the item developers, or we can set up webinars. This allows us to diagnose issues and propose fixes BEFORE the (man-machine) translation workflow kicks in.

The interaction between psychometricians, cognitive psychologists and linguists:

• Instils new dynamics in item writing.

• Produces a corpus of item-by-item translation and adaptation notes.

Action points for the testing industry

Determine the skills that item developers (and human linguists) need to acquire or hone so that their expertise can be effectively woven into new man-machine (translation) workflows.

If the testing industry can work with experts to define the new skills that linguists will need (translation technology, natural language processing, discernment) and test linguists for these skills, it will have a new product that will be in high demand in the buoyant localization market.

It is high time to:

• Upgrade your item pools to translatable master versions, ready to be fed into AI.

• Train a good MT engine with high-quality translations.

• Enlist the help of experts to usefully supplement NMT in tomorrow’s Man-Machine Translation workflows.

If you do your homework before translation begins, you can catch the early train of tomorrow’s multilingual assessment.