Gender bias in machine translation

by Pisana Ferrari – cApStAn Ambassador to the Global Village

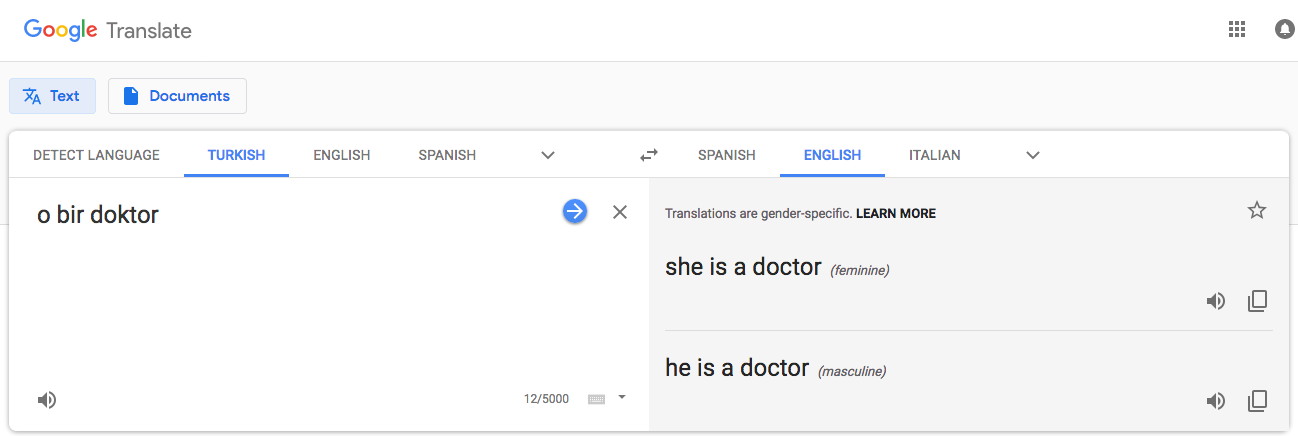

Do the footprints of stereotyping follow us into online environments? Yes, according to cApStAn linguist Emel Ince, and all the more so for “gender-neutral” languages such as her own, Turkish, which makes no male or female distinction in the third person: the pronoun “o” is used for both. Machine translation of Turkish content into English resulted in:

O ilginç – He is interesting

O bir aşçı – She is a cook

O bir doktor – He is a doctor

Who is to blame? The programmers, the platforms, the text corpora fed into the system? Google has recently addressed the issue and announced that it will now offer both a feminine and a masculine translation for a single word, like “surgeon”, when translating from English into French, Italian, Portuguese or Spanish. It will also offer both when translating phrases or sentences from Turkish into English: “O bir doktor” will now be translated as both “she is a doctor” and “he is a doctor”. Until now the Google platform provided only one translation per query, even when the translation could take either a feminine or a masculine form. In Google's words: “So when the model produced one translation, it inadvertently replicated gender biases that already existed”. A welcome development.

Read Emel’s article on our blog @ https://bit.ly/2ILDg13

Google announcement @ https://blog.google/products/translate/reducing-gender-bias-google-translate/