Disentangling Proficiency in Programming from Proficiency in English: Codility meets cApStAn

by Pisana Ferrari – Branding and Social Media Manager

If a skills assessment demands too high a level of language proficiency, this may hamper the accurate measurement of the very skills it targets. For example, a skilled programmer may fail a test because of the high level of English proficiency required to complete it. During our recent live webinar titled “Disentangling Proficiency in Programming from Proficiency in English: Codility meets cApStAn”, Neil Morelli, Chief I-O Psychologist at Codility, and cApStAn co-founder Andrea Ferrari talked about their recent collaboration on a project involving the analysis and adjustment of the language proficiency level required to understand and perform Codility assessment tasks. Codility is the #1 rated recruitment platform for developers, helping world-class companies like Microsoft, Intel, and American Express to assess candidate programmers via skills-based coding tests.

cApStAn’s 3-step language proficiency analysis and adjustment process

Step 1: The first step consisted of analyzing the level of English reading comprehension currently required to take Codility programming tests. This included the following:

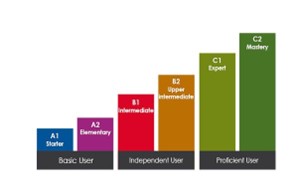

- A “difficulty analysis” of the test tasks, which was conducted with the aid of Text Inspector, a professional language analysis tool benchmarked to the CEFR (Common European Framework of Reference for Languages) levels (see image 1);

- Compilation of a list of technical terms that are essential to measure the skills. Once agreement was reached on these terms, they were excluded from the analysis;

- The Text Inspector output was a variable number of metrics, used to compute an overall score;

- cApStAn “engineered” its own overall score, a weighted average of 13 metrics, applied consistently.
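The two mechanical parts of this step, excluding the agreed technical terms and combining metrics into one weighted score, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the metric names, the weights, the term list, and the function names are all hypothetical, and only three metrics are shown instead of the 13 that cApStAn used. It does not reproduce Text Inspector or the actual cApStAn scoring.

```python
import re

# Hypothetical list of agreed technical terms (assumption, not the real list).
TECHNICAL_TERMS = {"array", "integer", "function"}


def strip_technical_terms(text, technical_terms=TECHNICAL_TERMS):
    """Return the lowercased words of `text`, minus the agreed technical terms."""
    words = re.findall(r"[A-Za-z']+", text.lower())
    return [w for w in words if w not in technical_terms]


def overall_score(metrics, weights):
    """Weighted average of metric values.

    The same `weights` mapping is meant to be reused for every task,
    so that scores stay comparable from one task to the next.
    """
    if set(metrics) != set(weights):
        raise ValueError("metrics and weights must cover the same names")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(metrics[name] * weights[name] for name in weights) / total_weight


# Hypothetical weights and metric values, for illustration only.
WEIGHTS = {"lexical_sophistication": 2.0, "sentence_length": 1.0, "readability": 1.0}
task_metrics = {"lexical_sophistication": 4.0, "sentence_length": 3.0, "readability": 2.0}
score = overall_score(task_metrics, WEIGHTS)
```

Keeping the weights in a single shared mapping is what makes the score “applied consistently”: every task is measured against the same yardstick, regardless of how many metrics an upstream tool happens to report.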

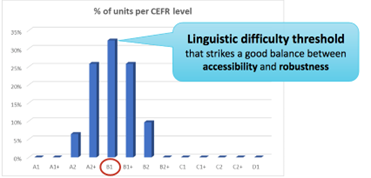

Step 2: The next step was to determine a reading comprehension threshold that would strike a good balance between accessibility for a global audience and robust test items. Based on the results of step 1, this was set at B1 (see image 2).

Step 3: Finally, the task descriptions of Codility assessments were reviewed and edited to bring them as close as possible to the B1 threshold. This step included:

- Replacing difficult words/phrases with easier synonyms. The English Vocabulary Profile Online website is an excellent resource for checking the CEFR level of candidate replacements. All replacements were recorded in a term base, so that the same replacements can be applied systematically in future tasks as well;

- Breaking up long sentences into shorter ones, to improve readability (this may require some rewording);

- Adding a number of non-IT terms to the list of known words, once an introductory explanation of these terms had been added at the beginning.
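The first two edits above lend themselves to light tooling. The following Python sketch shows one possible way to apply a term base systematically and to flag long sentences for a human editor. The term-base entries, the 20-word limit, and the function names are assumptions made for this example, not cApStAn's actual data or tools.

```python
import re

# Hypothetical term base: difficult word or phrase -> easier replacement.
TERM_BASE = {
    "in order to": "to",
    "utilize": "use",
    "subsequently": "then",
}


def apply_term_base(text, term_base=TERM_BASE):
    """Replace every term-base entry found in `text`.

    Matching is case-insensitive and on whole words only. Replacements are
    inserted exactly as written in the term base, so capitalization at the
    start of a sentence still needs a human check.
    """
    # Handle longer entries first so a phrase is not partly replaced
    # by a shorter entry that happens to overlap with it.
    for term in sorted(term_base, key=len, reverse=True):
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        replacement = term_base[term]
        text = pattern.sub(lambda match, r=replacement: r, text)
    return text


def long_sentences(text, max_words=20):
    """Return the sentences with more than `max_words` words.

    Sentences are split naively on ., ! or ? followed by whitespace;
    the result is a list of candidates for manual splitting.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if len(s.split()) > max_words]


simplified = apply_term_base("You must utilize a loop in order to count the items.")
```

The sentence check only flags candidates. As noted above, splitting a sentence may require rewording, so that part is best left to an editor.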

How Codility used the output

- Existing Task Improvement

  - Reviewed and edited tasks with the recommended changes

  - The internal content team used the lessons learned to edit a large set of tasks

- Technical Documentation

  - Adding the study, findings, and follow-ups to Codility’s 45+ page Technical Manual

- Content Team Training

  - Adding lessons and best practices from the analysis to onboarding and training for new content team members

Future developments

- Periodic Task Reviews by cApStAn

  - Plans to review new task tranches at regular intervals

- Incorporate Lessons into Task Development

  - Lessons will inform improvements to the task development playbook and tools

- Focus on Translation R&D

  - Plans to review translated content for equivalence

Want to try this out on your materials?

Select some sample items or questions and request a free pilot analysis and adjustment of language proficiency at hermes@capstan.be.