Update from the CSDI Workshop in Vienna

The 2026 Comparative Survey Design and Implementation (CSDI) Workshop, held in beautiful Vienna from March 23rd to 25th, once again demonstrated the importance and complexity of translation, adaptation, and linguistic nuance in producing comparable data for multinational studies. Over an intensive three-day programme, the workshop brought together leading specialists in comparative methodology, with a strong emphasis on questionnaire translation, linguistic adaptation, and cross-cultural measurement. In sessions ranging from Advances in Translation and Questionnaire Design to Questionnaire Translation for Cultural Contexts, researchers examined how translation quality fundamentally shapes data comparability in international surveys. Presentations highlighted emerging practices such as integrating large language models (LLMs) and persona-based cognitive testing into 3MC (multinational, multiregional, and multicultural) questionnaire appraisal, developing taxonomies of gendered language, and refining hybrid TRAPD (Translation, Review, Adjudication, Pretesting, Documentation) workflows that blend technological innovation with established expert-driven methods. Contributors also explored subtle translation errors and their effects on response behaviour, the evolution of key terms in language varieties such as US Spanish, and the creation of repositories of multilingual answer scales to support harmonised measurement across contexts.

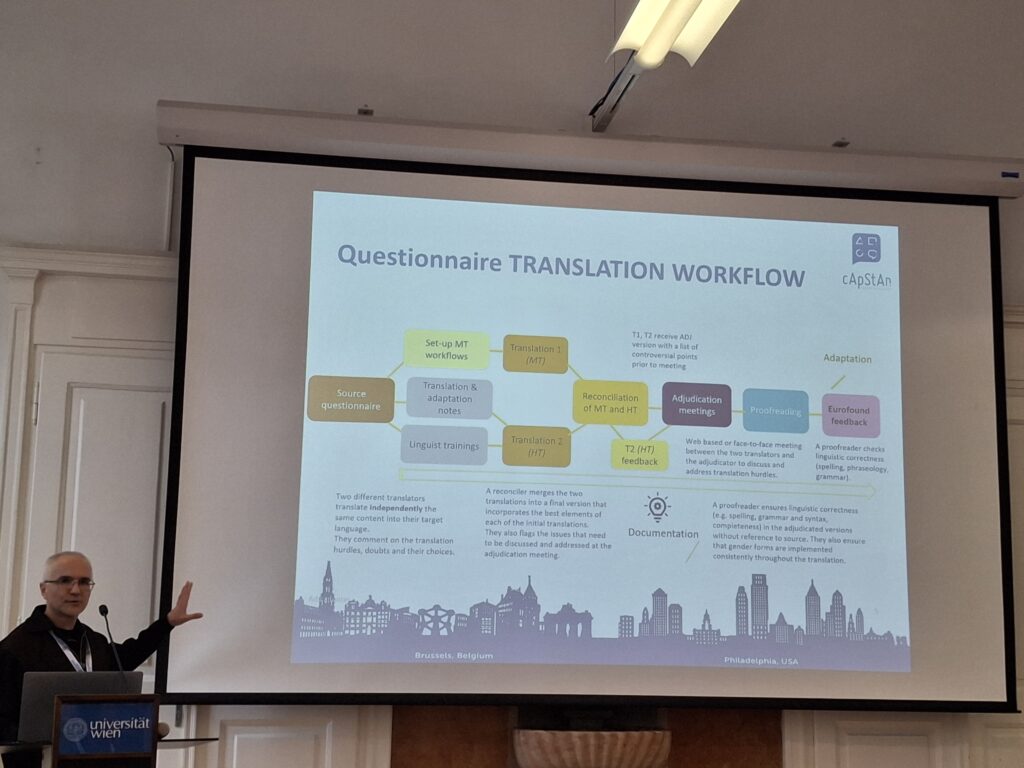

Our colleague Musab Hayatli contributed to the session Advances in Translation and Questionnaire Design, where he showcased cApStAn's latest work on technological approaches to translation. His presentation, Integrating technological advances and established translation methodology: A hybrid approach to TRAPD, outlined how new tools, including LLM-supported processes, can be responsibly incorporated into the trusted TRAPD framework. The aim is to strengthen linguistic quality assurance while preserving expert judgement, contextual sensitivity, and cross-cultural validity.

A highlight for cApStAn was the session Questionnaire Translation for Cultural Contexts, chaired by Dr Brita Dorer and co-chaired by Musab, which brought together a diverse set of presentations addressing both methodological innovation and ongoing challenges in multilingual survey work. Alisú Schoua-Glusberg (Research Support Services) examined the evolution of US Spanish terminology and showed how cognitive testing can help reassess translated terms in a shifting linguistic landscape. Patricia Goerman (U.S. Census Bureau) discussed how expert review methods, from the traditional Question Appraisal System (QAS) to emerging approaches supported by generative AI, can better guide translators and reviewers. Tobias Weber (GESIS), in collaboration with cApStAn, introduced efforts to build a multilingual repository of answer scales for the research community, while Ulrike Efu Nkong (GESIS) demonstrated how subtle machine-generated translation errors can influence respondent behaviour. Dorothée Behr (GESIS) offered a lifecycle perspective in her talk Questionnaire translations: From the cradle to the grave, and Brita Dorer presented work in progress comparing different translation approaches within a mixed-methods design. Collectively, these contributions underscored the value of shared resources, methodological transparency, and collaborative innovation in improving comparability and reducing duplication of effort in large-scale survey research.